Google Starts New Project (CATS4ML) For Better Object Recognition

Google | Wednesday, 17 February 2021 at 05:20

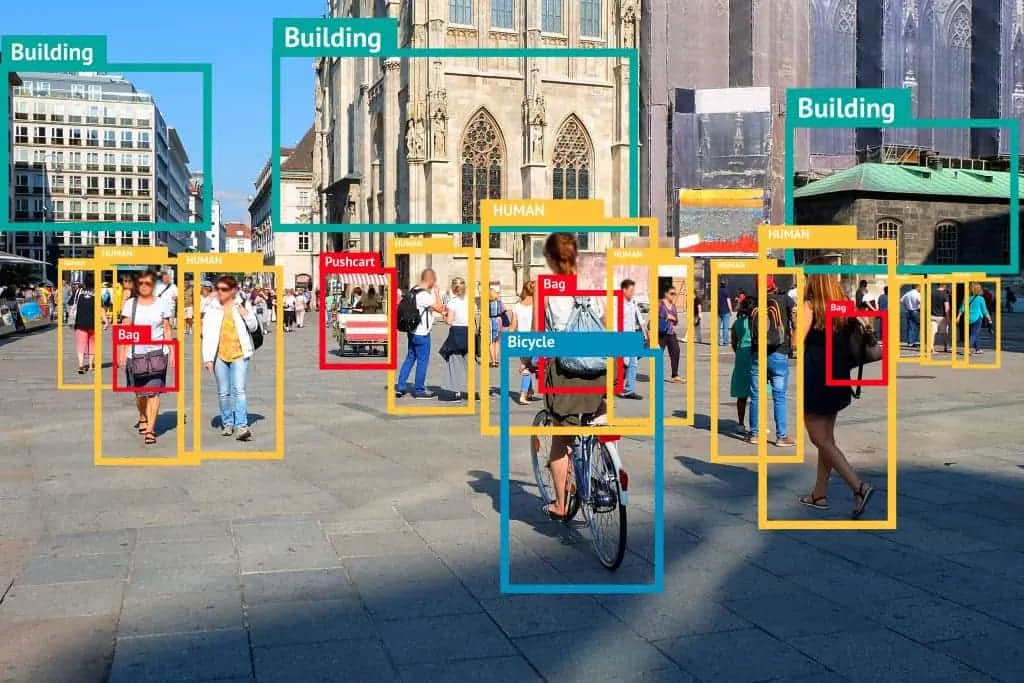

Google has launched the Crowdsourcing Adverse Test Sets for Machine Learning (CATS4ML) challenge, which asks participants to use innovative methods to find examples of "unknown unknowns" in machine learning models. As the approach matures, the results are expected to help Google's object recognition technology perform better.


CATS4ML is designed to challenge how well machine learning handles object recognition tasks. The test set will contain many examples that are hard for algorithms to handle: cases where a model reports high confidence but classifies the image incorrectly. The aim is to give developers a data set for exploring an algorithm's weaknesses, and to help researchers build benchmark data sets that are more balanced and diverse.

How Does CATS4ML Work?

Google notes that the effectiveness of a machine learning model depends on its algorithm and on its training and evaluation data. Researchers have done a great deal to improve algorithms and training data, but data sets and challenges built specifically to evaluate models are uncommon. Existing evaluation data sets are too easy: they contain few ambiguous examples on which a model's predictions could go wrong. Without such examples, a model's effectiveness cannot be truly tested, and its weaknesses may go unnoticed.

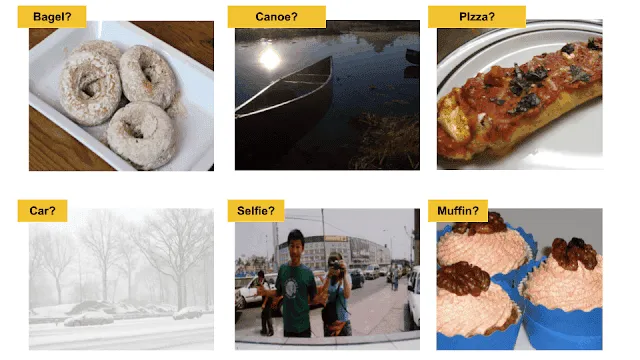

A weakness, in this sense, is a class of examples that the model has difficulty classifying correctly and that goes undetected because the evaluation data set lacks such examples. There are two kinds of weakness: known unknowns and unknown unknowns. Known unknowns are examples where the model is unsure whether its classification is correct, for example when it cannot decide whether the object in a photo is a cat. Unknown unknowns are examples where the model is sure of its answer but has actually misclassified the input.
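The distinction can be made concrete with a minimal Python sketch. The 0.8 confidence threshold and the names `Outcome` and `categorize` are illustrative assumptions, not part of CATS4ML.

```python
from enum import Enum


class Outcome(Enum):
    CORRECT = "correct"
    KNOWN_UNKNOWN = "known unknown"
    UNKNOWN_UNKNOWN = "unknown unknown"


def categorize(confidence: float, is_correct: bool, threshold: float = 0.8) -> Outcome:
    """Label one prediction by model confidence and actual correctness.

    `threshold` is an assumed cut-off for "the model is sure of its answer".
    """
    if confidence < threshold:
        # The model itself signals doubt, whether or not it happens to be right.
        return Outcome.KNOWN_UNKNOWN
    if is_correct:
        return Outcome.CORRECT
    # Confident and wrong: nothing in the model's output flags this error.
    return Outcome.UNKNOWN_UNKNOWN


print(categorize(0.55, False))  # low confidence
print(categorize(0.97, False))  # high confidence, wrong answer
```

In this sketch the first call falls below the threshold and is treated as a known unknown, while the second is a confident mistake, the kind of example the challenge is after.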

Unknown Unknowns

When a model encounters a known unknown, its low confidence usually prompts the system to ask a person to verify the result. So even if the prediction is wrong, people can still see what the model does not know. Unknown unknowns are different: because the model reports no doubt, people have to actively search for errors and unexpected model behavior.

CATS4ML aims to collect unknown unknowns at scale by gathering examples that humans can reliably interpret but that models find difficult to process. Google is launching the first version of the CATS4ML data challenge, which focuses on visual recognition tasks. Using the images and labels of the Open Images Dataset, challengers can explore this existing public data set in new and creative ways to find examples of unknown unknowns.
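As a rough picture of what such a search might look like, the sketch below takes human-verified labels and a model's predictions for the same images and keeps the cases where the model is confident yet disagrees with the humans. The record fields, the `find_candidates` helper, the sample data, and the 0.8 threshold are all hypothetical and do not reflect the challenge's actual data or submission format.

```python
from typing import NamedTuple


class Prediction(NamedTuple):
    image_id: str      # hypothetical image identifier
    human_label: str   # label that human raters agree on
    model_label: str   # label the model predicted
    confidence: float  # model's confidence in model_label, from 0 to 1


def find_candidates(preds, threshold=0.8):
    """Return confident misclassifications, most confident first."""
    hits = [
        p for p in preds
        if p.model_label != p.human_label and p.confidence >= threshold
    ]
    return sorted(hits, key=lambda p: p.confidence, reverse=True)


# Made-up records for illustration only.
sample = [
    Prediction("img-1", "cat", "cat", 0.99),     # confident and right
    Prediction("img-2", "cat", "dog", 0.95),     # confident and wrong
    Prediction("img-3", "cat", "dog", 0.40),     # wrong, but the model is unsure
    Prediction("img-4", "bird", "plane", 0.88),  # confident and wrong
]

for p in find_candidates(sample):
    print(p.image_id, p.human_label, p.model_label, p.confidence)
```

Sorting by confidence puts the most surprising errors first. The low-confidence mistake (`img-3`) is left out because the model already signals doubt there, which makes it a known unknown.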

Facebook DynaBench

Some time ago, Facebook's AI research group launched the DynaBench dynamic benchmarking platform. Its purpose is to provide a more challenging approach than current benchmarks, one in which people try to fool artificial intelligence models, so that model quality can be evaluated better. Google describes CATS4ML as complementary to DynaBench: DynaBench addresses the limits of static benchmarks by adding human participation to the testing loop, while CATS4ML encourages exploration of existing machine learning benchmarks to find unknown unknowns and so avoid possible future errors in the model.
