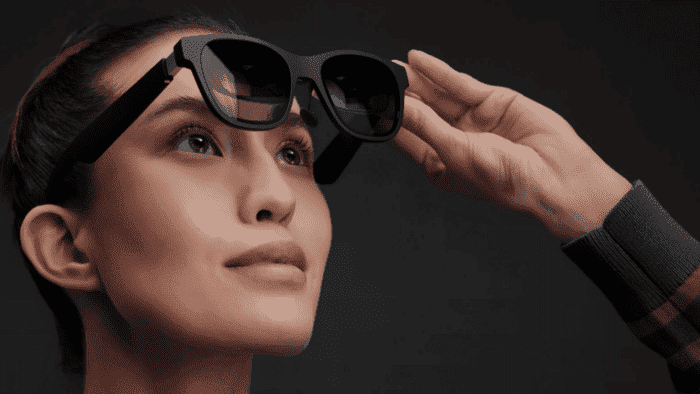

When someone with a hearing impairment wants to communicate with others, they usually rely on lip reading and facial expressions to understand the person they are talking to. For some people with a hearing impairment, hearing aids work. However, hearing aids do not work for everyone, and they are not always convenient. A British company, XRAI Glass, has launched a product that can display real-time captions on the lenses of smart glasses.

The smart glasses are produced by the AR glasses company Nreal, and the software comes from XRAI Glass. When in use, the microphone on the glasses captures the conversation audio and sends it to a mobile phone over a wired connection. Audio software on the phone then generates the subtitles, which are displayed on the smart glasses in real time. The software can also identify who is speaking, and it works during phone calls as well.

Not ideal for a noisy environment

Because the smart glasses rely heavily on audio software, the results are less than ideal in a noisy environment. The glasses may also not work properly in a conversation with many people. However, XRAI Glass President Schoff said optimistically that product development and optimization always take time. This is only the first generation of the product, and subsequent generations will come with improvements. In the future, the software will be paired with contact lenses, and audio technology will also develop rapidly. The company said, “We are proud that this innovative technology can enrich the lives of the hearing impaired.”

“The impact of being able to talk to someone without looking at their lips is clearly life-changing,” according to people who have used the glasses.

The smart glasses currently cost 399.99 pounds ($487). As of now, 100 people with hearing impairments are already using them. If all goes well, the glasses will be officially released in September.

Google hardware director: AR smart glasses are still in development

Google hardware director Rick Osterloh said in an interview on Wednesday that “ambient computing” is Google’s future goal and vision. “Computing should be able to help you solve any problem seamlessly, and be right there with you,” Osterloh said. A few months ago, Google unveiled a slew of new products and feature updates at its 2022 Google I/O developer conference. At the end of the event, Google previewed new augmented reality (AR) smart glasses that support the Google Translate service.

Google previously released Google Glass, a pair of smart glasses that could connect to the Internet, but the project ultimately failed. In the 10 years since the device launched, Google has carried out a number of research projects in augmented reality and related areas. However, most of its hardware products have been more traditional devices: smartphones, laptops, and smart home speakers.

“We’ve learned a lot from the launch of Google Glass,” Osterloh said in an interview. “We know how hard it is to develop this technology and what users care about and what’s most important.”

Osterloh did not say when the augmented reality smart glasses will be available. Nevertheless, he confirmed that Google has “a number of engineers and developers continuing to develop” the product for internal testing. “There’s still some way to go, but we’ll continue to invest heavily in augmented reality,” he said.

Still, products such as smart glasses play a key role in Google’s “ambient computing” vision, Osterloh said. “You can see how wonderful it would be if you had a device on your face that would allow you to communicate instantly, do translations and be able to detect the world around you in real time,” he said. This is similar to what the smart glasses from XRAI Glass and Nreal offer.

Zuckerberg urges Meta AR glasses to launch in 2024

According to former employees of Meta’s AR smart glasses project, Mark Zuckerberg hopes to make Meta’s upcoming AR glasses his “iPhone moment”, one that reinvigorates both him and the company. According to the report, Meta’s first-generation AR glasses, dubbed Nazare, will be designed to work independently of a phone, using a wireless device that offloads some of the computing.

One feature of the device is the ability for users to communicate and interact with holograms of other people. Meta intends to launch its first-generation AR glasses by 2024, targeting early adopters and developers, according to the report. That same year, the company also plans to release a pair of cheaper smart glasses codenamed Hypernova. These glasses will pair with smartphones to show incoming messages and other notifications on a heads-up display.

Looking ahead, Meta’s AR roadmap includes a lighter, more advanced version of the Nazare glasses due in 2026. This will be followed by a third version in 2028. If the AR glasses are successful, Zuckerberg hopes they will cast Meta, and himself, in a new light as innovators. However, Meta will have to contend with Apple, which has its own AR ambitions. Apple is working on at least two AR projects, including an augmented reality headset to be released in late 2022 or 2023, followed by a sleeker pair of augmented reality glasses.

Apple’s AR/VR headset has been expected in 2022, perhaps at the Worldwide Developers Conference (WWDC) in June, but Apple still needs to overcome some development issues. Reliable sources such as analyst Ming-Chi Kuo and Bloomberg’s Mark Gurman say the headset could instead be released in 2023, with the smart glasses following in 2024 or 2025.
