You Might Soon Be Vibing to AI-Generated Music Without Even Knowing It!

News | Friday, 16 December 2022 at 08:19

Thought AI tools had already peaked? Think again! AI-generated music is now a thing. Yes, you read that right: AI tools can now produce music from nothing but a text prompt. And the results are better than you might expect after reading the title.

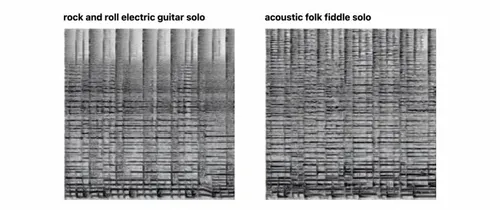

That said, it's not like AI tools can directly make music now. Instead, the music is produced through an AI image generator, which outputs spectrograms: pictures that show how the frequencies in a sound change over time. Those spectrograms can then be converted into audio clips. So, does this mean AI-produced music will replace human-created music in the future?

Meet Riffusion: The AI That Can Generate Music

Image-based AI works by training computer algorithms on pictures of places and objects. After that, the algorithms can generate new images that are similar to the training pictures but still unique. Some good examples would be DALL-E and Stable Diffusion.

At this point, you can make these programs visualize anything you want. All through text!

The AI tool that makes these spectrograms goes by the name of Riffusion. It is a recent AI project, and at its core, it is a text-to-image generator based on Stable Diffusion. But how did it become capable of generating music?

How AI-Generated Music Came to Light

Behind Riffusion, you have the roboticist Hayk Martiros and software developer Seth Forsgren. They wanted to see if the current AI programs could work in the audio realm. And that's how the journey of Riffusion in creating music began. Forsgren tells PCMag:

Hayk and I play in a little band together, and we started the project simply because we love music. Seeing the awesome results of Stable Diffusion for image generation, we asked ourselves what it would look like to use a diffusion approach to create music.

To find out, the team of two trained the open-source Stable Diffusion model on images of spectrograms paired with text descriptions. After that training, the program was capable of producing spectrograms of music based on particular prompts.
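To make the idea of a spectrogram more concrete, here is a minimal sketch of how one can be computed from raw audio samples. The article does not describe how Riffusion's training images were produced, so everything here is an assumption for illustration: the function names `magnitude_spectrogram` and `tone`, the frame size, and the use of a slow, naive Fourier transform in place of the optimized FFT libraries a real pipeline would likely use.

```python
import cmath
import math


def magnitude_spectrogram(samples, frame_size=64, hop=32):
    """Return a list of time frames; each frame holds one magnitude per frequency bin.

    Hypothetical helper for illustration. Drawn as an image, each frame would be
    one column of pixels and each magnitude would set a pixel's brightness.
    """
    frames = []
    for start in range(0, len(samples) - frame_size + 1, hop):
        frame = samples[start:start + frame_size]
        # Hann window: fade the frame edges to reduce spectral leakage.
        windowed = [
            x * 0.5 * (1.0 - math.cos(2.0 * math.pi * n / (frame_size - 1)))
            for n, x in enumerate(frame)
        ]
        magnitudes = []
        for k in range(frame_size // 2 + 1):
            # Naive discrete Fourier transform for frequency bin k.
            total = sum(
                x * cmath.exp(-2j * math.pi * k * n / frame_size)
                for n, x in enumerate(windowed)
            )
            magnitudes.append(abs(total))
        frames.append(magnitudes)
    return frames


def tone(freq_hz, seconds, sample_rate=8000):
    """Generate a pure sine tone as a list of samples (hypothetical test signal)."""
    count = round(seconds * sample_rate)
    return [math.sin(2.0 * math.pi * freq_hz * n / sample_rate) for n in range(count)]
```

Calling `magnitude_spectrogram(tone(1000.0, 0.032))` yields a handful of frames in which one frequency bin clearly dominates, which is what a single steady note looks like in a spectrogram. Note that only magnitudes are kept; the phase information is discarded, which matters when going back from image to sound.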

At first, we didn't know if it would even be possible for the Stable Diffusion model architecture to create a spectrogram image with enough fidelity to convert into audio, but it turns out it can do that and more. At every step along the way, we've been more and more impressed by what is possible, and one idea leads to the next. - Forsgren
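The conversion from a spectrogram back into audio can be sketched in a few lines as well. This is a toy version under stated assumptions, not Riffusion's actual method, which the article does not describe: it treats each frequency bin as a sine wave whose loudness follows the pixel brightness (additive synthesis). A spectrogram image carries no phase information, so practical systems usually estimate the phase with an algorithm such as Griffin-Lim; this sketch skips that step. The names `spectrogram_to_samples` and `write_wav` are hypothetical.

```python
import math
import struct
import wave


def spectrogram_to_samples(spec, freqs_hz, frame_seconds=0.1, sample_rate=8000):
    """Turn a magnitude spectrogram into audio samples by additive synthesis.

    spec[t][k] is the brightness (0.0 to 1.0) of frequency bin k in time frame t,
    and freqs_hz[k] is the frequency that bin k stands for. Both are assumptions
    made for this illustration.
    """
    samples_per_frame = round(frame_seconds * sample_rate)
    samples = []
    for t, frame in enumerate(spec):
        for n in range(samples_per_frame):
            # Use one global clock so each sine wave stays continuous across frames.
            time = (t * samples_per_frame + n) / sample_rate
            value = sum(
                mag * math.sin(2.0 * math.pi * freq * time)
                for mag, freq in zip(frame, freqs_hz)
            )
            samples.append(value)
    # Normalize to the range -1.0..1.0; fall back to 1.0 for silence or empty input.
    peak = max((abs(s) for s in samples), default=0.0) or 1.0
    return [s / peak for s in samples]


def write_wav(path, samples, sample_rate=8000):
    """Save samples in the range -1.0..1.0 as a 16-bit mono WAV file."""
    with wave.open(path, "wb") as wav_file:
        wav_file.setnchannels(1)
        wav_file.setsampwidth(2)
        wav_file.setframerate(sample_rate)
        wav_file.writeframes(
            b"".join(struct.pack("<h", int(s * 32767)) for s in samples)
        )
```

As a usage example, `spectrogram_to_samples([[1.0, 0.0], [0.0, 1.0]], [440.0, 880.0])` produces a 440 Hz tone followed by an 880 Hz tone, and `write_wav("clip.wav", samples)` saves the result as a playable file. Real music spectrograms have hundreds of bins and frames, but the principle is the same.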

You Can Try Out Riffusion Now!

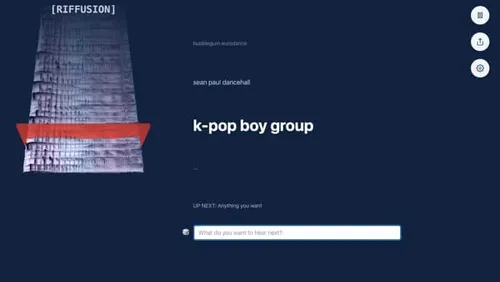

Martiros and Forsgren published their results on the official Riffusion website. It was meant to be a hobby project. But now, visitors can plug in their own text prompts, and Riffusion will produce a matching spectrogram. The site then converts that spectrogram into an audio clip that visitors can play right there.

The results, at this stage, might not be that high in quality. But they're definitely not as bad as you might have thought. The AI can even generate more variations of the spectrogram as you listen. Here's an example of the AI trying to create an "Arabic gospel":

[audio mp3="https://www.gizchina.com/wp-content/uploads/images/2022/12/Arabic-gospel.mp3"][/audio]

See? The results are good! You can also get jazzy AI-generated music. And those clips, too, are great. Check out this result from the prompt "funk bassline with a jazzy saxophone solo":

[audio mp3="https://www.gizchina.com/wp-content/uploads/images/2022/12/funk-bassline-with-a-jazzy-saxophone-solo.mp3"][/audio]

Riffusion can also try to imitate song styles, including Eminem-style rap and K-pop. But it is not that great at generating lyrics. Instead of actual words, you will hear melodic, human-sounding gibberish. The great part is that the gibberish still matches the song's tone.

Can AI-Generated Music Replace Human-Created Music?

This technology is not yet at a point where it can replace human-created music. But the project showed us that AI image algorithms still have a lot of untapped potential. It could soon be a helping hand for music creators, perhaps as a source of inspiration for a song.
