2023 will be remembered as the year AI took off. OpenAI may have started the revolution in late 2022 with the launch of ChatGPT, but the race for this technology only intensified this year. Now, nearly every company in the tech segment is pushing toward AI. The AI-driven chatbot has changed the way we interact with the web and keeps impressing us. Microsoft was quick to invest in OpenAI in exchange for its expertise. Thanks to a reported $10 billion investment, the company was able to integrate ChatGPT technology into Microsoft Edge, Bing, and other products. Thanks to this smart move, Bing is seeing something of a comeback, and even Samsung is reportedly considering dropping Google Search in favor of the refreshed, AI-driven Bing. Microsoft has everything it needs to be one of the leading companies in this AI revolution, and it is clearly pushing in that direction.

Microsoft's "Athena" chip will pave the way for AI solutions

According to Reuters, Microsoft is developing its own AI chip under the codename "Athena". The chip will power the technology behind AI chatbots like ChatGPT. Reuters cites a report from The Information that is based on sources familiar with the matter. It is worth noting that, although Microsoft's big investment in OpenAI is recent, development of the chip started back in 2019. Currently, a small group of Microsoft and OpenAI employees is working on it.

These AI chips will be used for training large language models and supporting inference. Both are needed for generative AI like the one behind ChatGPT. The chatbot needs to process a huge amount of data at once, recognize patterns, and create new outputs to deliver contextual replies that mimic human conversation. The company wants an in-house chip that performs better than the chips it has to buy from vendors. That would save it money and time as it keeps investing in AI.

It is worth noting that Microsoft is not the only one to see the need for an in-house AI chip. Google and Amazon also make their own chips for AI. The search giant was one of the first to promise an "AI revolution", but OpenAI got there first with ChatGPT. Now, Google is racing to turn its AI chatbot, Bard, into a strong rival to ChatGPT. The company faces a tough challenge. After all, Microsoft has gained a certain advantage thanks to OpenAI.

A new competitor for NVIDIA in the AI chip segment

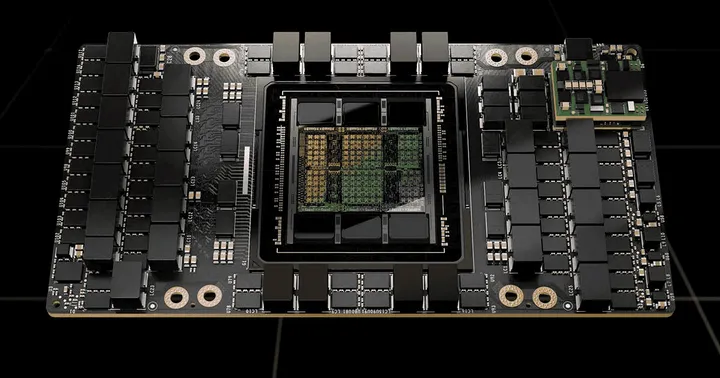

If there is one company benefiting from the AI race, it's NVIDIA. The giant offers software that lets companies develop their own AI solutions. In addition, NVIDIA is the biggest chip supplier for this technology. In fact, a recent report suggests that Elon Musk's new AI venture (X.AI Corp) will also use NVIDIA chips. With the rise of Microsoft as an AI chipmaker, NVIDIA may face some competition.

We don't know exactly how far along the development of the new chips is. However, Microsoft is certainly trying to accelerate their deployment. The giant wants to capitalize on this market as much as possible and surpass Google with its Bing solutions.

OpenAI also has a lot to gain from a possible Microsoft chip. Right now, the costly NVIDIA chips are in very high demand. According to estimates, OpenAI will need more than 30,000 of NVIDIA's A100 GPUs for the commercialization of ChatGPT. NVIDIA's latest H100 GPUs are selling for more than $40,000 on eBay. That's just a small illustration of the demand for these high-end chips, which serve as the core of the new AI technology. NVIDIA is racing to build as many chips as possible to meet this huge demand.

It's unclear whether Microsoft will make its AI chips available to Azure cloud customers. However, it is planning to make them available more broadly inside Microsoft and OpenAI as early as next year. Moreover, the report suggests that the giant even has a road map for the chips that includes multiple future generations. The new chips may not completely replace NVIDIA chips. But as we've said above, they will allow the company to significantly cut costs in its AI push. They will also serve to reinforce in-house solutions such as Bing, Office apps, and GitHub.
