Microsoft's AI Called VALL-E Needs 3 Seconds To Imitate Anyone's Voice

Thursday, 12 January 2023 at 13:35

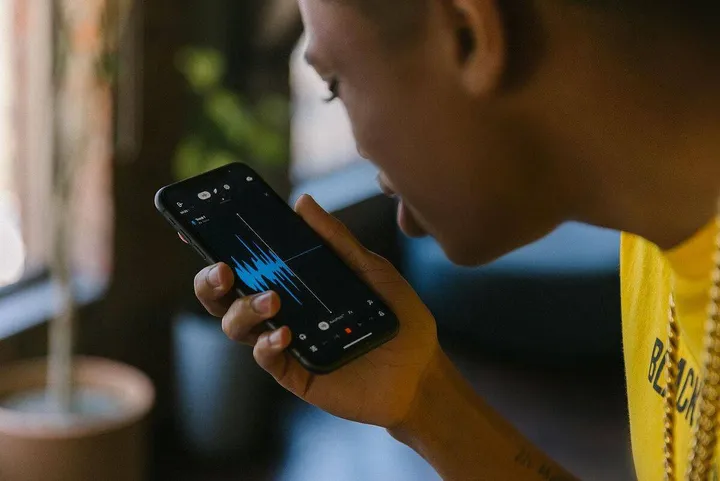

Microsoft has shown an AI that can imitate any human voice. It is called VALL-E, a name that echoes the earlier DALL-E, the model that generates images from text descriptions.

VALL-E can imitate a person's timbre and manner of speech from just a three-second sample of their real voice. Although the output still sounds slightly robotic, the result is impressive.

Microsoft calls it a "neural codec language model." VALL-E builds on EnCodec, an audio codec based on machine learning techniques that Meta released in 2022.

VALL-E imitates anyone’s voice

Most other text-to-speech methods synthesize speech by manipulating waveforms. VALL-E instead generates discrete audio codec codes from a text prompt and an audio prompt. In effect, it analyzes how a person sounds, then breaks that information down into discrete parts (called "tokens") via EnCodec. Finally, it uses its training data to match what it "knows" about how that voice would sound if it spoke phrases outside of the three-second sample.
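To make the idea of "tokens" more concrete, here is a toy sketch in Python. It is not EnCodec and not VALL-E: a real neural codec learns its codebooks from data, while the hand-written `TOY_CODEBOOK`, the function names, and the four-sample frame size below are all assumptions made up for illustration. The sketch only shows the general principle of replacing short chunks of audio with the index of the closest entry in a codebook.

```python
import math

# Hypothetical, hand-made codebook: each entry is a 4-sample pattern.
# A real neural codec would learn these entries from training data.
TOY_CODEBOOK = [
    [0.0, 0.0, 0.0, 0.0],      # token 0: silence
    [0.0, 1.0, 0.0, -1.0],     # token 1: one short sine-like cycle
    [1.0, 1.0, 1.0, 1.0],      # token 2: constant high level
    [-1.0, -1.0, -1.0, -1.0],  # token 3: constant low level
]


def make_frames(samples, frame_size):
    """Split samples into non-overlapping frames, dropping any remainder."""
    last_start = len(samples) - frame_size
    return [samples[i:i + frame_size] for i in range(0, last_start + 1, frame_size)]


def nearest_code(frame, codebook):
    """Return the index of the codebook entry closest to the frame."""
    def squared_distance(entry):
        return sum((a - b) ** 2 for a, b in zip(frame, entry))
    return min(range(len(codebook)), key=lambda i: squared_distance(codebook[i]))


def tokenize(samples, codebook):
    """Turn a waveform into a list of integer tokens."""
    frame_size = len(codebook[0])
    return [nearest_code(f, codebook) for f in make_frames(samples, frame_size)]


def detokenize(tokens, codebook):
    """Rebuild an approximate waveform by concatenating codebook entries."""
    samples = []
    for token in tokens:
        samples.extend(codebook[token])
    return samples


# Two cycles of a sine wave (period of 4 samples) followed by silence.
wave = [math.sin(2 * math.pi * n / 4) for n in range(8)] + [0.0] * 4
tokens = tokenize(wave, TOY_CODEBOOK)
print(tokens)  # [1, 1, 0]
```

Twelve audio samples become three small integers. A language model can then be trained to predict sequences of such integers, which is roughly the sense in which VALL-E treats speech generation as a language modeling problem.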

VALL-E was trained on LibriLight, an audio library that contains 60,000 hours of English speech from more than 7,000 speakers. The developers suggest that the method could be used for high-quality text-to-speech applications. For instance, it could be used for speech editing, where a recording of a person's words is changed to say something else. It could also be used to create audio content, such as voiceovers for audiobooks.

Of course, such technology also carries a certain danger. Sooner or later, unscrupulous users will turn it into a blackmail tool. For example, they could use the AI to make it appear that famous people said something they never did. There have already been such cases with video deepfakes.

You may have seen the deepfake video in which Elon Musk appears to promise huge returns on an investment in a dodgy cryptocurrency.
