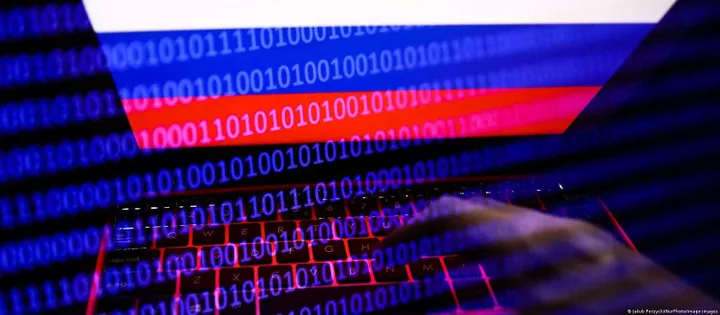

Russian Hackers Use ChatGPT For Various Cyber Crimes

Artificial intelligence

Wednesday, 18 January 2023 at 12:30

Hackers in Russia are trying to bypass restrictions on ChatGPT and use it for malicious purposes.

Check Point Research, the research arm of the Israeli IT security company Check Point, recently found many threads on underground forums in which hackers discuss ways of getting access to ChatGPT. The methods include paying for OpenAI user accounts with stolen payment cards, bypassing geographic restrictions, and using a "local semi-legal online SMS service".

ChatGPT is a new AI chatbot that has gained a lot of attention thanks to its versatility and ease of use. But it is not available in every country. Users in Russia, Belarus, China, Iran, Venezuela, and several other countries cannot use it. Ukraine joined the list today.

ChatGPT is a boon for hackers

Cybersecurity researchers have already seen hackers use the tool to write phishing emails and code for malicious macros in Office files. As a reminder, a few days ago we learned about a test run by Check Point Research security experts: after a few simple queries, the chatbot wrote code that can be used in malware.

OpenAI imposes a number of restrictions on its service, and because of the war in Ukraine, hackers in Russia face even more obstacles. But it turns out that these obstacles are not enough.

"Bypassing OpenAI measures that restrict access to ChatGPT for certain countries is easy. Right now, we see hackers in Russia trying to bypass geofencing to use ChatGPT for their malicious goals. We believe that these hackers are trying to use ChatGPT for their criminal goals. Cybercriminals like ChatGPT because the AI tech behind it can make a hacker's job more cost-effective," experts say.

Many scammers are also trying to profit from the tool's growing popularity. For instance, an app in the App Store posed as the chatbot and charged a subscription of about $10 per month. Similar apps were found on Google Play, charging up to $15 per use.
