DeepSeek V4 Is Here: Open-Source, Huawei-Compatible, and Priced to Disrupt

AI · Saturday, 25 April 2026 at 05:15

DeepSeek's V4 preview arrives more than a year after the company's R1 reasoning model rattled global tech markets by matching top benchmarks at a fraction of the cost of models built by the likes of OpenAI and Google. Now V4 raises the stakes again. The Hangzhou-based lab has released two variants: a high-capacity Pro and a leaner Flash. Both are open-source. Both are priced aggressively. And both are supported on Huawei's Ascend hardware, a path that sidesteps Nvidia, at least for inference and at least on paper.

Summary

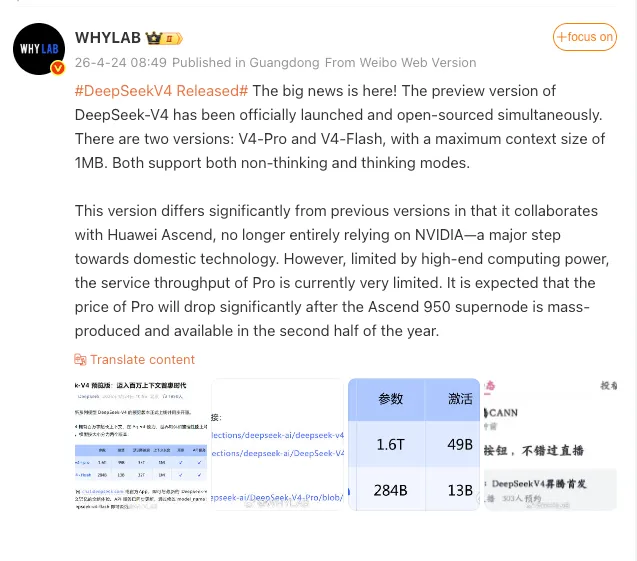

- Two models released: DeepSeek-V4-Pro (1.6 trillion parameters) and DeepSeek-V4-Flash (284 billion parameters), both with a 1 million token context window supporting thinking and non-thinking modes.

- Huawei Ascend compatibility confirmed: Huawei announced its entire Ascend supernode product line now supports V4 — though DeepSeek has not disclosed what hardware it actually used to train the model.

- Text-only for now: DeepSeek stated it is actively working on multimodal capabilities; image and video processing are not yet supported.

- Pro capacity is currently limited: DeepSeek acknowledged high-end compute constraints are restricting V4-Pro throughput at launch.

- Cheapest in class: V4-Pro is priced at $1.74/1M input tokens and V4-Flash at $0.14/1M input tokens, both currently the lowest prices in their respective tiers.

The Models: Parameters, Context, and What "Preview" Actually Means

DeepSeek released two new models — V4-Pro and V4-Flash. The Pro version features 1.6 trillion parameters, while the Flash version is a smaller, leaner model with 284 billion parameters. Both have a context window of 1 million tokens. Both support thinking and non-thinking modes, which means developers can toggle between a faster response style and a more deliberate, chain-of-thought reasoning approach depending on the task.
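To make the mode toggle and the 1-million-token window concrete, here is a minimal Python sketch of client-side request planning. Everything in it is an assumption for illustration: `plan_request`, `RequestPlan`, the model labels, and the 8,000-token default output budget are hypothetical and not part of DeepSeek's API, and the window is treated as exactly 1,000,000 tokens shared by prompt and output.

```python
from dataclasses import dataclass

# Assumption: "1 million tokens" is read as exactly 1,000,000 tokens shared by
# prompt and output; the real limit should be checked in DeepSeek's API docs.
CONTEXT_WINDOW_TOKENS = 1_000_000


@dataclass(frozen=True)
class RequestPlan:
    model: str              # hypothetical label such as "V4-Flash", not an official model ID
    thinking: bool          # True selects the deliberate, chain-of-thought mode
    max_output_tokens: int  # output budget reserved inside the context window


def plan_request(model: str, prompt_tokens: int, needs_reasoning: bool,
                 max_output_tokens: int = 8_000) -> RequestPlan:
    """Pick a mode and check that prompt plus output fits in the context window."""
    if prompt_tokens < 0 or max_output_tokens <= 0:
        raise ValueError("prompt tokens must be >= 0 and the output budget > 0")
    total = prompt_tokens + max_output_tokens
    if total > CONTEXT_WINDOW_TOKENS:
        raise ValueError(
            f"{total} tokens exceeds the {CONTEXT_WINDOW_TOKENS}-token window"
        )
    return RequestPlan(model, thinking=needs_reasoning,
                       max_output_tokens=max_output_tokens)


if __name__ == "__main__":
    # Hypothetical: a long document review that benefits from step-by-step reasoning.
    print(plan_request("V4-Pro", prompt_tokens=900_000, needs_reasoning=True))
```

In a real client, the `thinking` flag would map onto whatever parameter or model ID DeepSeek's API actually uses to switch modes; checking the budget locally simply avoids sending a request that cannot fit.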

The word "preview" matters here. Preview versions allow the company to incorporate real-world feedback and make changes ahead of a final product launch. DeepSeek did not provide a timeline for when the model is expected to be finalized. Frankly, the preview label is an honest acknowledgment that the model is unfinished, and one the industry should heed before treating benchmark claims as gospel.

The models can only process text for now, with DeepSeek stating it was "working on incorporating multimodal capabilities," which would allow the model to also process images and video. For teams evaluating V4 for production workloads that require image understanding, that's a meaningful gap.


The Huawei Question: Support Is Not the Same as Training

Here's where precision matters. It is tempting to read V4 as a clean strategic break from Nvidia in favor of Huawei. The reality is more complicated. DeepSeek's close collaboration with Huawei on V4 contrasts with its past reliance on Nvidia's chips, but the startup has not disclosed which processors it used to train its latest model.

What Huawei did confirm is unambiguous, but it concerns inference: the company announced that its Ascend supernode, built on Ascend 950 AI chips, will fully support DeepSeek's V4 preview. That signals a shift from DeepSeek's earlier reliance on Nvidia hardware toward tighter integration with Chinese chip vendors. Whether V4 was actually trained on Ascend silicon, rather than on Nvidia hardware acquired before export controls tightened, remains an open question that DeepSeek has so far left unanswered.

The Pricing: Where the Real Disruption Sits

The benchmark numbers will probably generate the headlines, but the pricing is where the story actually lands for developers and enterprises. V4-Pro costs $1.74/1M input tokens and $3.48/1M output tokens, while V4-Flash costs $0.14/1M input and $0.28/1M output, both currently the cheapest in their class. That's not a marginal undercut. That's a structural price difference that forces competitors to respond.
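The gap is easier to see with a worked example. The Python sketch below applies the launch list prices quoted above to a hypothetical workload of 10 million input tokens and 2 million output tokens. `workload_cost` is an illustrative helper, not a DeepSeek tool, and it assumes flat per-token pricing with no cache or volume discounts.

```python
# Per-million-token list prices in USD as quoted at launch (preview pricing;
# assumed flat, ignoring any cached-input or volume discounts).
PRICES_PER_MILLION = {
    "V4-Pro": {"input": 1.74, "output": 3.48},
    "V4-Flash": {"input": 0.14, "output": 0.28},
}


def workload_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of a workload at list prices, rounded to cents."""
    price = PRICES_PER_MILLION[model]
    cost = (input_tokens * price["input"]
            + output_tokens * price["output"]) / 1_000_000
    return round(cost, 2)


if __name__ == "__main__":
    # Hypothetical workload: 10M input tokens and 2M output tokens.
    for name in PRICES_PER_MILLION:
        print(name, workload_cost(name, 10_000_000, 2_000_000))
```

At these prices the workload costs about $24.36 on V4-Pro and $1.96 on V4-Flash. Because each Pro price is exactly 87/7 (roughly 12.4 times) the matching Flash price, and output costs twice as much as input on both models, that ratio holds for any mix of input and output tokens.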


Capacity Constraints and What Comes Next

DeepSeek acknowledged that, due to constraints in high-end compute capacity, current service capacity for V4-Pro is very limited. That's a candid admission, and one that reflects how hard it is for Chinese AI labs to secure frontier-grade compute under ongoing US export restrictions. Huawei has committed its Ascend 950-based supernodes as a supported inference target, with vendor tooling and runtime optimizations expected to follow. As that hardware scales, throughput should expand and pricing could drop further, though no specific timeline has been confirmed.

After DeepSeek announced the V4 release, shares of Chinese contract chip manufacturers rose in Hong Kong, with SMIC and Hua Hong Semiconductor surging sharply. The market, at least, has drawn its own conclusions about what a Huawei-compatible frontier model means for the domestic chip ecosystem.
