Apple and University of Wisconsin-Madison Introduce RubiCap, a New AI Training Framework

Apple | Thursday, 26 March 2026 at 02:41

According to a report from 9to5Mac, Apple, working with the University of Wisconsin-Madison, has introduced an AI training framework called RubiCap. The system is designed to improve how models learn to produce “dense image descriptions.”

RubiCap Arrives as Apple's New AI Training Framework

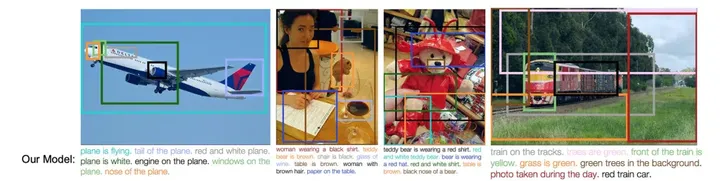

Apple is pushing forward in AI training with RubiCap, a new framework built to improve how models understand and describe images in detail. Instead of providing a single, general description, RubiCap focuses on a technique known as dense image captioning. That means breaking an image into smaller parts and describing each one clearly. So rather than just saying “a table with food,” it can point out details like “a red apple on the table” or “people walking in the background.” The result feels far more precise and useful.

This kind of detail matters. It plays a big role in training visual AI systems, improving text-to-image tools, and enhancing accessibility for people who rely on accurate descriptions. Training these models, however, has always been a challenge. Manual labeling takes time and effort, while AI-generated data often lacks variety and struggles to handle new scenarios. Apple’s answer is a different approach.

Key Points (TL;DR)

- RubiCap is Apple’s new framework for training AI on detailed image descriptions

- Focuses on dense image captioning (describing multiple parts of an image, not just one)

- Uses reinforcement learning instead of relying only on manual or synthetic data

- Combines models like GPT-5, Gemini 2.5 Pro, and Qwen2.5 in a structured workflow

- Trained on ~50,000 images with multi-step evaluation and scoring

- 7B model outperformed much larger 72B models in testing

- 3B model even outperformed the 7B version in some scenarios

- Shows that training quality can matter more than model size

With RubiCap, the company leans on reinforcement learning. The process starts with a dataset of around 50,000 images. Advanced models like GPT-5 and Gemini 2.5 Pro generate multiple description options. Then, Gemini steps in again to review those results, identify what’s missing, and turn that into clear scoring guidelines. Finally, Qwen2.5 acts as a judge, scoring each output and giving structured feedback so the system can improve over time.
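To make the scoring step more concrete, here is a minimal Python sketch of how a rubric could turn a caption into a reward number. It is an illustration under stated assumptions, not Apple's implementation: the names `Criterion`, `toy_judge`, and `rubric_reward` are hypothetical, the keyword check merely stands in for the LLM judge, and the weighted-fraction formula is an assumed scoring rule that the report does not specify.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    """One rubric item: a detail a good caption should mention (hypothetical type)."""
    description: str
    keywords: tuple[str, ...]  # assumption: used only by the toy judge below
    weight: float = 1.0


def toy_judge(caption: str, criterion: Criterion) -> bool:
    """Stand-in for an LLM judge: passes if every keyword appears in the caption."""
    text = caption.lower()
    return all(keyword in text for keyword in criterion.keywords)


def rubric_reward(caption: str, rubric: list[Criterion]) -> float:
    """Weighted fraction of rubric criteria the caption satisfies, in [0, 1]."""
    total = sum(c.weight for c in rubric)
    if total == 0:
        return 0.0
    earned = sum(c.weight for c in rubric if toy_judge(caption, c))
    return earned / total


# A rubric of the kind a reviewer model might write for one image.
rubric = [
    Criterion("mentions the red apple", ("red", "apple"), weight=2.0),
    Criterion("mentions the table", ("table",), weight=1.0),
    Criterion("mentions people in the background", ("people", "background"), weight=1.0),
]

candidates = [
    "A table with food.",
    "A red apple on the table, with people walking in the background.",
]

# One reward per candidate caption; the detailed caption scores higher.
rewards = [rubric_reward(c, rubric) for c in candidates]
print(rewards)  # [0.25, 1.0]
```

In a reinforcement learning setup, numbers like these would serve as the feedback signal that nudges the captioning model toward descriptions covering more of the rubric.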


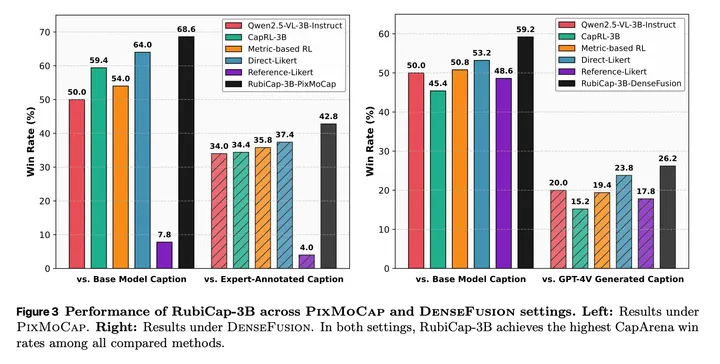

The results are surprisingly strong. Apple trained three models with 2B, 3B, and 7B parameters, and even the smaller ones perform at a high level. The 7B model stood out in blind tests, delivering fewer hallucination errors and outperforming models many times its size, including some with up to 72B parameters.

Even more interesting, the 3B model outperformed the 7B version in certain cases. It’s a clear sign that better training methods can outweigh raw scale. In short, smarter training is starting to beat bigger models.

In related news, Apple has scheduled WWDC 2026, where we expect the company to share more details about its AI plans.
