Google TPU Chips Reach Their 8th Generation With Separate Chips For Training And Inference

Google | Friday, 24 April 2026 at 05:22

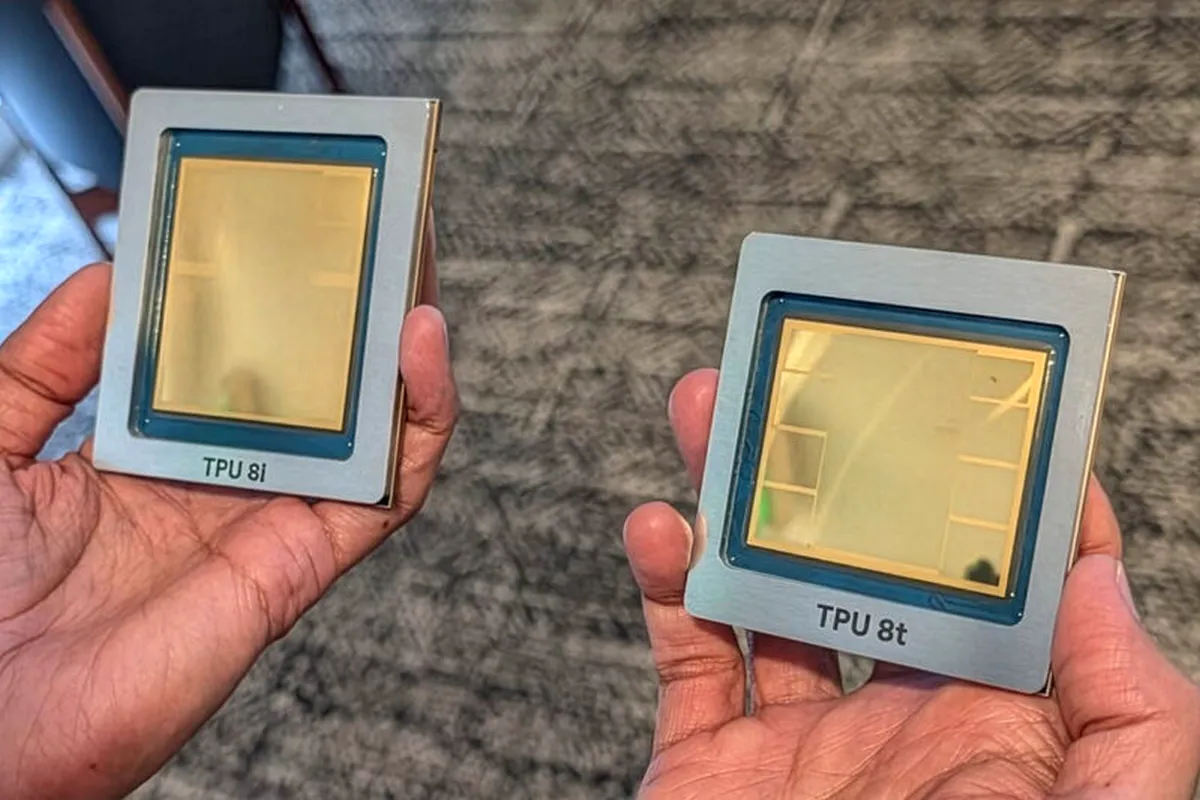

Google used the Google Cloud Next stage to unveil the eighth generation of its TPU chips, and this time the approach is different. Instead of one design handling everything, the search giant now separates training and inference into two dedicated chips.

The change results in two models. The Google TPU 8t is built for training large AI models, while the Google TPU 8i focuses on running those models efficiently. It is the first time Google has split these workloads at the hardware level, which signals a more focused and scalable direction for its AI infrastructure.

Google TPU 8t Targets Raw Training Power

The TPU 8t was developed with Broadcom and aims straight at heavy training tasks. Each chip packs 216 GB of HBM, 6.5 TB/s of bandwidth, and 128 MB of SRAM. Compute performance reaches 12.6 petaFLOPS in FP4.

Scaling is where it stands out. A single pod can link up to 9,600 chips, reaching 121 exaflops of FP4 compute and 2 PB of shared memory. Compared to the earlier Ironwood TPU, performance is about 3 times higher, with much better value for the dollar.
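As a quick sanity check, the pod-level figures can be reproduced from the per-chip numbers quoted above. The sketch below assumes perfect linear scaling across the pod and decimal units (1 PB = 1,000,000 GB); the function name `pod_totals` is only illustrative.

```python
# Per-chip figures quoted in the article for the TPU 8t.
CHIPS_PER_POD = 9_600
FP4_PFLOPS_PER_CHIP = 12.6
HBM_GB_PER_CHIP = 216


def pod_totals(chips, pflops_per_chip, hbm_gb_per_chip):
    """Return (exaflops, petabytes) for a pod, assuming perfect linear scaling."""
    exaflops = chips * pflops_per_chip / 1_000        # 1 EFLOPS = 1,000 PFLOPS
    petabytes = chips * hbm_gb_per_chip / 1_000_000   # 1 PB = 1,000,000 GB (decimal)
    return exaflops, petabytes


exaflops, petabytes = pod_totals(CHIPS_PER_POD, FP4_PFLOPS_PER_CHIP, HBM_GB_PER_CHIP)
print(f"{exaflops:.2f} EFLOPS FP4, {petabytes:.2f} PB HBM")
# prints: 120.96 EFLOPS FP4, 2.07 PB HBM
```

Both results round to the 121 exaflops and 2 PB cited for a full pod, so the headline numbers appear to be simple sums of the per-chip specifications.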

The system also leans on optical switching and advanced networking to scale past one million chips in a cluster. Storage is handled by a managed Lustre system that pushes up to 10 TB/s throughput.

Google TPU 8i Focuses On Efficient Inference

For inference, Google teamed up with MediaTek to build the TPU 8i. This version increases memory to 288 GB of HBM and boosts bandwidth to 8.6 TB/s, along with 384 MB of SRAM. Compute performance reaches 10.1 petaFLOPS in FP4, and a full pod can scale to 1,152 chips, delivering 11.6 exaflops in FP8. Compared to Ironwood, it delivers about 80 percent higher performance at the same cost and more than double the efficiency per watt.
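To see how the two chips differ in balance, the quoted per-chip figures can be turned into a rough bandwidth-to-compute ratio. This is a minimal sketch: "bytes per 1,000 FLOPs" is an illustrative ratio computed here, not a specification from Google, and it assumes the quoted bandwidth and FP4 numbers are directly comparable between the two chips.

```python
# Per-chip figures quoted in the article.
SPECS = {
    "TPU 8t": {"hbm_gb": 216, "bw_tbps": 6.5, "sram_mb": 128, "fp4_pflops": 12.6},
    "TPU 8i": {"hbm_gb": 288, "bw_tbps": 8.6, "sram_mb": 384, "fp4_pflops": 10.1},
}


def bytes_per_kiloflop(spec):
    """TB/s divided by PFLOPS gives bytes moved per 1,000 FLOPs (illustrative ratio)."""
    return spec["bw_tbps"] / spec["fp4_pflops"]


ratios = {name: bytes_per_kiloflop(spec) for name, spec in SPECS.items()}
for name, ratio in ratios.items():
    print(f"{name}: {ratio:.2f} bytes per 1,000 FP4 FLOPs")

# Pod-level check for the TPU 8i, assuming perfect linear scaling.
TPU_8I_CHIPS_PER_POD = 1_152
pod_exaflops = TPU_8I_CHIPS_PER_POD * SPECS["TPU 8i"]["fp4_pflops"] / 1_000
print(f"TPU 8i pod: {pod_exaflops:.2f} EFLOPS from the per-chip FP4 figure")
```

Under these assumptions the TPU 8i has noticeably more bandwidth per unit of compute than the TPU 8t, which fits its inference focus. The pod calculation multiplies the per-chip FP4 figure by the chip count and lands at roughly 11.6 exaflops, the same value the article attributes to FP8; the sketch cannot settle which precision the pod figure actually refers to.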

Networking also improves. The Boardfly topology cuts down communication steps, while the Collection Acceleration Engine significantly reduces latency, which is especially helpful for Mixture of Experts models.


Both the TPU 8t and 8i are built on TSMC's 2nm process, use Google’s custom Arm-based Axion CPU, and rely on fourth-generation liquid cooling. They are expected to land on Google Cloud later this year, with full-scale production planned for next year. Current software support includes JAX, PyTorch, Keras, and vLLM.

It remains to be seen how this new strategy plays out, but given the current race in AI and Google's efforts in this area, the first results may arrive in the coming months.
